Markov Chains Tutorial #5 © Ilan Gronau. Based on original slides of Ydo Wexler & Dan Geiger.

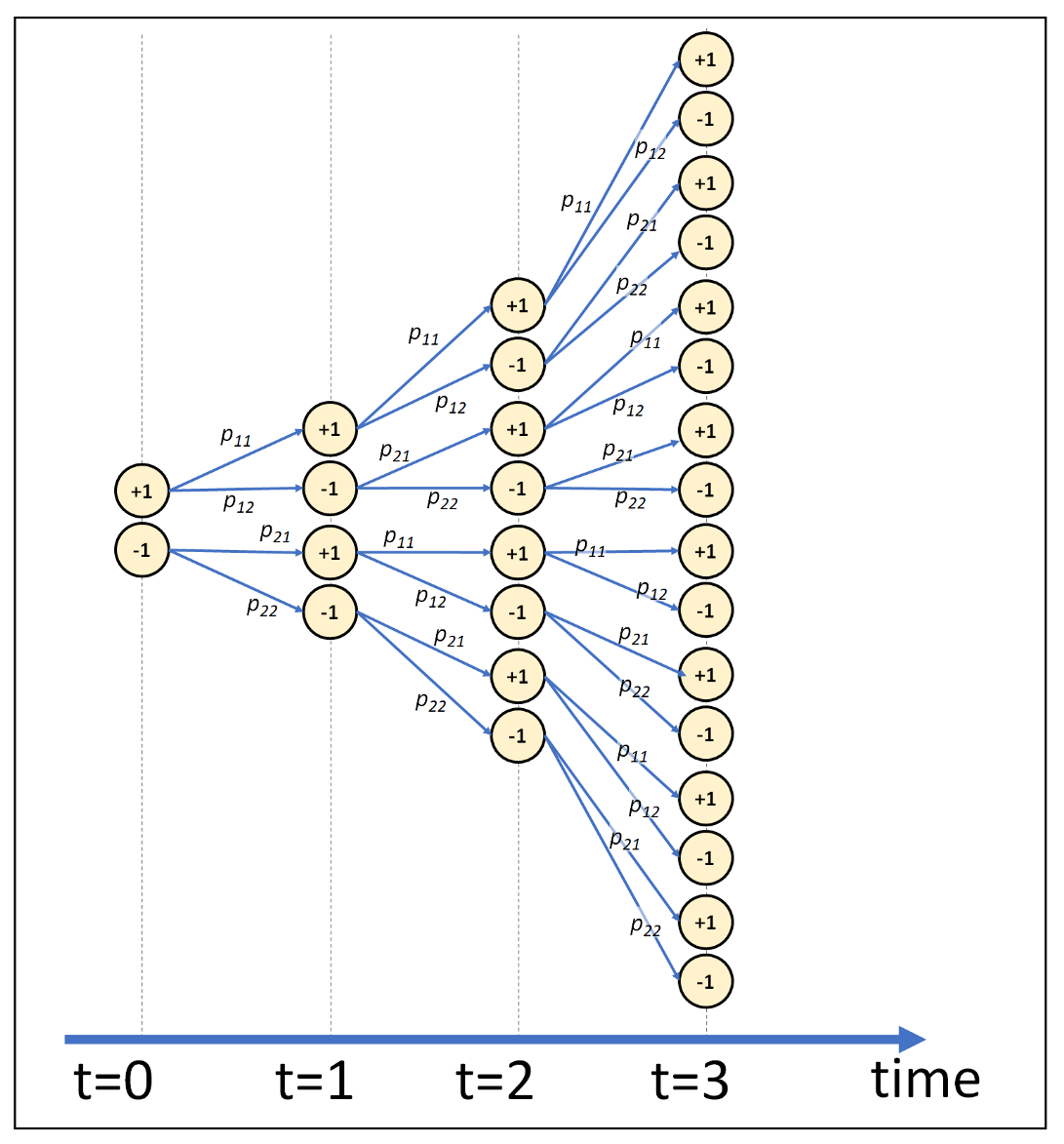

Markov chain representation of a Markov process and 2-state model fit...

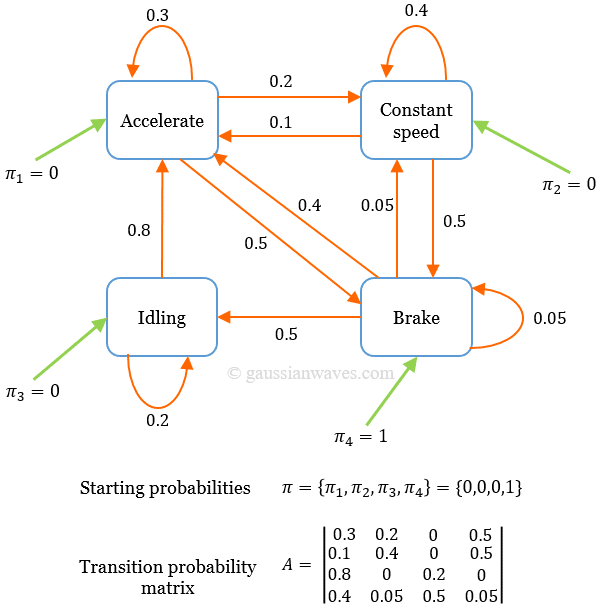

Markov Models. Charles Yan. Markov Chains. A Markov process is a stochastic process (random process) in which the probability distribution of the...

In M/M/1 Markov process, why must entering and leaving the zero state be equal? (Mathematics Stack Exchange)

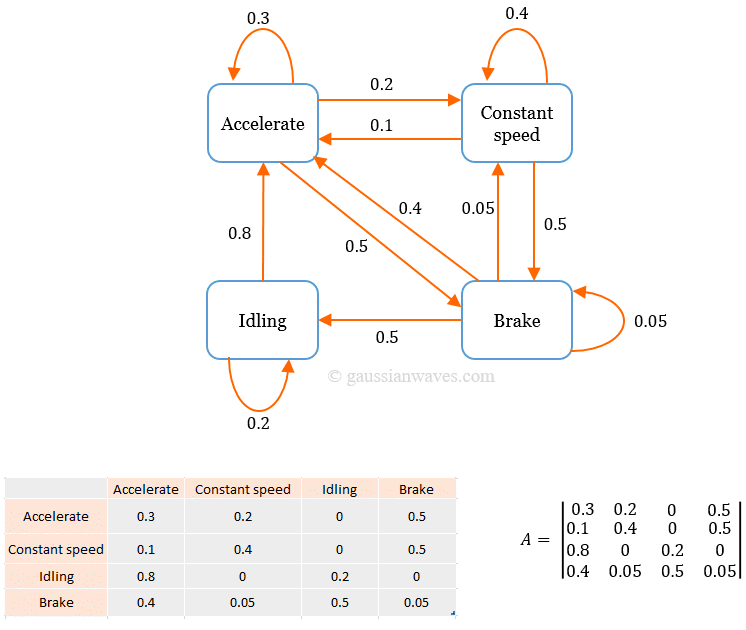

Reinforcement Learning Basics: Understanding Stochastic Theory Underlying a Markov Decision Process | by Shailey Dash | Towards Data Science

DAGs illustrating a Markovian/non-Markovian chain. A DAG with (a) three...

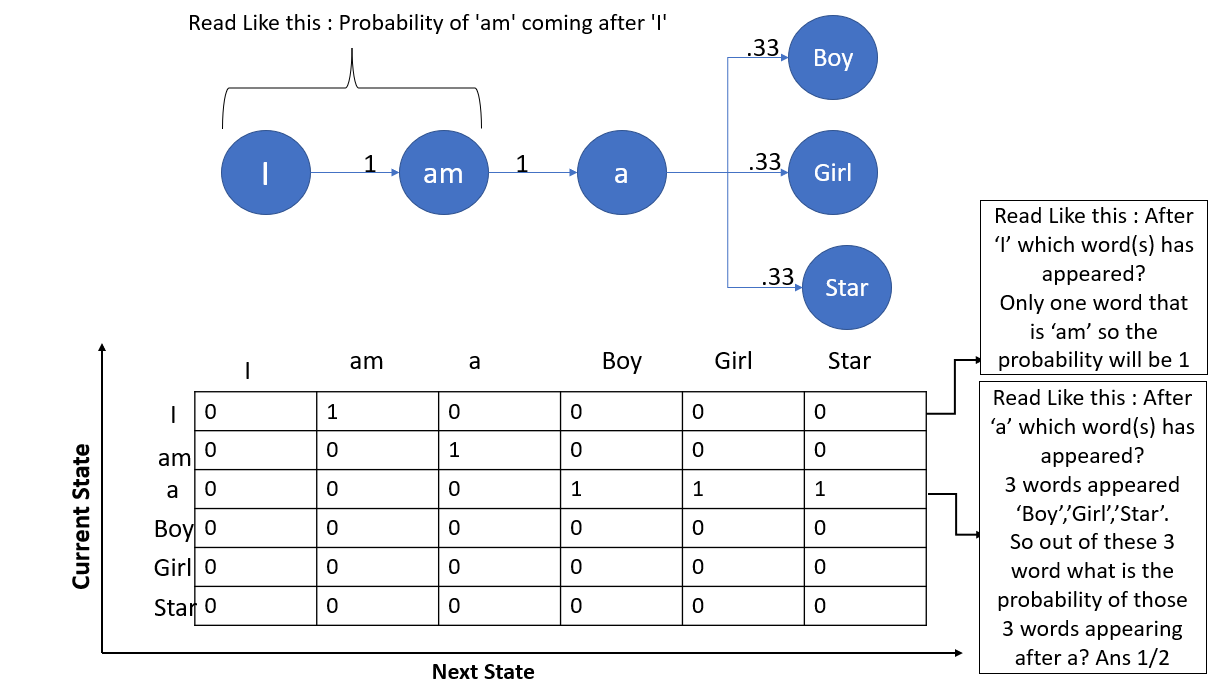

Introduction to Markov Chains. What are Markov chains, when to use… | by Devin Soni | Towards Data Science

![Markov Chain Attribution Modeling [Complete Guide] - Adequate](https://adequate.digital/wp-content/uploads/2019/07/Markov-Chain-Attribution-Modeling-6.png)